For the first time, the United States has brought artificial intelligence within the scope of a criminal investigation, and regulation appears imminent

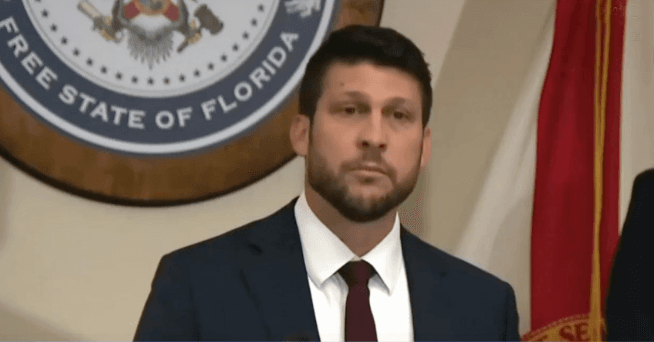

On April 21, local time, Florida Attorney General James Uthmeier announced that a criminal investigation has been formally opened into the American artificial intelligence company OpenAI and its chatbot ChatGPT over their potential role in the April 2025 shooting on the campus of Florida State University.

This case marks the first instance in the United States where artificial intelligence has been incorporated into a criminal investigation.

James Uthmeier, Attorney General of Florida, USA

Florida launches criminal investigation into ChatGPT over campus shooting, the first AI-related criminal case in the United States, drawing wide attention

According to the Florida Attorney General's Office, Phoenix Ikner, a student at Florida State University, carried out a shooting on campus that left two people dead and six injured.

Investigators found that Ikner had multiple exchanges with ChatGPT before committing the crime.

At the press conference, Uthmeier said that ChatGPT had provided the gunman with "important clues for committing the crime", including recommending suitable weapons and ammunition and helping choose the time and place of the attack so as to maximize casualties.

Uthmeier emphasized that this case highlights the serious risk of AI technology being abused.

He said, "If the person on the other end of the screen were a human, we would surely prosecute them for homicide. Of course, ChatGPT is not a person, but this does not exempt our team from investigating whether the company bears criminal responsibility."

In response to the allegations, OpenAI stated that ChatGPT was not responsible for this "horrific crime".

The company said that ChatGPT only provided "factual answers" to the questions Ikner asked, and did not actively incite or assist in committing crimes.

OpenAI also revealed that, upon learning of the attack, it had proactively handed over relevant data about Ikner to law enforcement authorities.

Even so, the case has raised deep public concern about the regulation of artificial intelligence technology.

Legal experts point out that with the rapid development of artificial intelligence technology, the risk of it being used for criminal activities has become increasingly prominent, and there is an urgent need to establish corresponding legal frameworks and regulatory mechanisms.

This case undoubtedly sounds an alarm for global AI governance, prompting all sectors to re-examine the ethical boundaries and legal responsibilities of AI technology.

AI pioneer Hinton: the development of superintelligent AI raises concerns, and global oversight urgently needs strengthening

Balancing technological innovation in artificial intelligence with risk prevention and control has become a major issue faced by both the global technology and legal communities.

On the 21st, local time, at the Digital World Congress 2026 held in Geneva, AI pioneer Geoffrey Hinton issued a strong warning: the prospect of coexistence between humans and superintelligent AI remains unclear, and global regulatory frameworks and ethical safeguards urgently need to be strengthened.

Geoffrey Hinton

Hinton, a Canadian scientist, was awarded the 2024 Nobel Prize in Physics for his pioneering work in the field of artificial intelligence. On April 21st, Hinton told the attendees via video link that although AI has shown great potential to enhance productivity in areas such as healthcare, its impact on the job market cannot be ignored. He particularly pointed out that in positions such as call centers, AI has been able to match or even surpass human performance, and with technological advancements, this trend will accelerate and spread to more intellectual labor fields.

Hinton said with a heavy heart, "We are creating a technology that may replace all human intellectual work, and the newly created jobs will also be done by artificial intelligence at a lower cost. What is even more worrying is that we are not sure whether we can coexist peacefully with superintelligent artificial intelligence."

Hinton's warning is not baseless. He left his senior position at Google in 2023 so that he could speak freely about the risks of AI. This time, he once again emphasized that the current global discourse on AI focuses too much on technological progress and commercial applications, while ignoring its profound impact on areas such as the labor market, social inequality, and public services. He added that it is simply crazy that only a very small share of resources is invested in research to ensure the safety of AI.

He suggested that at least 1% of the global funding for AI research should be allocated to developing safety mechanisms to ensure that technological development does not deviate from human control. At the same time, he emphasized the importance of international cooperation, arguing that only through global joint efforts can we effectively address the challenges posed by AI.